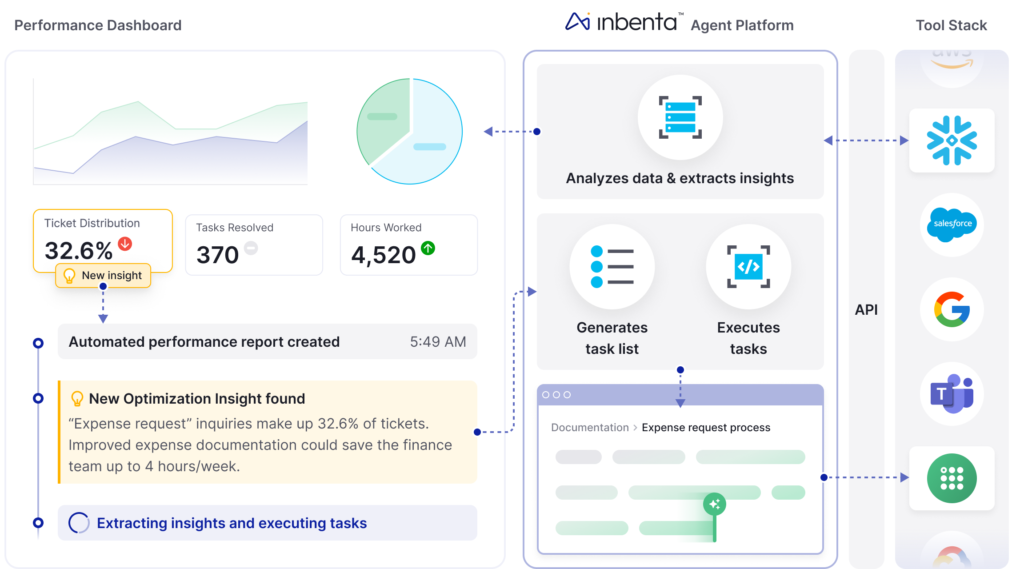

AI agents have moved far beyond simple productivity tools. What started as personal assistants for coding, chat, or task automation are now shared, organization-wide systems embedded deep inside daily operations. Across security, IT, engineering, and business teams, these agents increasingly coordinate work across multiple platforms and critical systems.

In many organizations, AI agents now handle tasks such as onboarding and offboarding employees, managing infrastructure changes, or resolving customer support issues. An HR-focused agent might automatically create or revoke accounts across identity systems, SaaS platforms, VPNs, and cloud environments when HR records change. A change management agent can review requests, update live configurations, log approvals in tools like ServiceNow, and update documentation in Confluence. Customer support agents often pull data from CRM and billing systems, trigger backend fixes, and update tickets without human intervention.

To work efficiently at scale, these organizational agents are typically granted far broader permissions than individual users. They need access to many tools, datasets, and systems in order to automate complex workflows. This design has delivered real benefits, including faster response times, reduced manual effort, and smoother operations. But it has also introduced a new and often overlooked access risk.

How Organizational AI Agents Change Access Models

Unlike individual users, organizational AI agents are shared resources. They are not tied to a single user or role but instead serve many users and workflows through one implementation. To function continuously, these agents rely on shared service accounts, API keys, or OAuth tokens that are centrally managed and often long-lived.

To avoid breaking workflows, organizations frequently grant these agents wide-ranging permissions that span multiple systems and actions. While this approach improves reliability and convenience, it also creates powerful intermediaries that sit between users and sensitive systems, operating outside traditional permission boundaries.

When Automation Breaks Traditional Access Controls

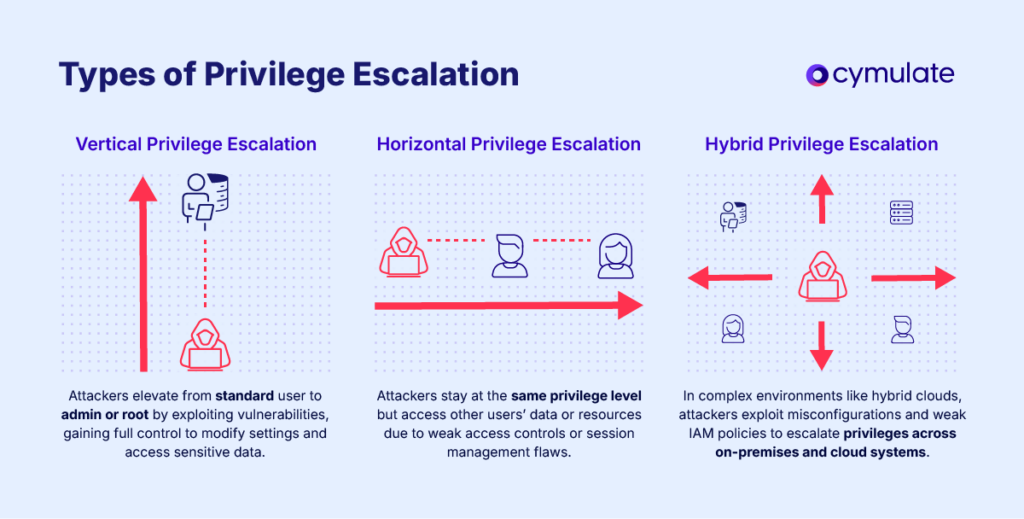

In a typical access control model, permissions are enforced at the user level. A user can only access what their role allows. AI agents disrupt this model. When a user makes a request through an agent, the action is executed under the agent’s identity, not the user’s.

This means a user with limited privileges can indirectly trigger actions or retrieve information they would not be allowed to access directly. Audit logs and system records usually show the agent as the actor, making it difficult to see who initiated the request or why it was allowed. As a result, privilege escalation can occur without obvious policy violations or alerts.
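One way to picture the attribution gap is an audit record that names both the identity that executed the action and the user who asked for it. The sketch below is illustrative only: the `AuditRecord` fields, the `record_agent_action` helper, and the account names are assumptions made for this example, not the schema of any particular logging product.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    """One audit entry naming both the executing agent and the requesting user."""
    actor: str         # identity that executed the action (the agent's service account)
    on_behalf_of: str  # human user who initiated the request
    action: str
    resource: str
    timestamp: str


def record_agent_action(agent_id: str, user_id: str, action: str, resource: str) -> str:
    """Build a JSON audit line; a real system would ship it to its log pipeline."""
    record = AuditRecord(
        actor=agent_id,
        on_behalf_of=user_id,
        action=action,
        resource=resource,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    return json.dumps(asdict(record))


# Hypothetical identities, used only to show the shape of the record.
line = record_agent_action("svc-support-agent", "alice", "read", "billing/invoices")
print(line)
```

With a field like `on_behalf_of` present, an investigator can ask who initiated a request even though the agent's service account is still recorded as the actor.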

Subtle, Everyday Examples of Agent-Driven Risk

These risks often surface during normal workflows rather than obvious abuse. A user without access to financial systems might ask an AI agent to summarize customer performance. The agent, operating with broader permissions, pulls data from finance, billing, and CRM platforms and returns insights the user should not normally see.

In another case, an engineer without production access asks an agent to resolve a deployment issue. The agent reviews logs, updates production configurations, and restarts pipelines using its own elevated credentials. The user never directly touched production, but production systems were modified on their behalf.

In both situations, the agent is authorized, the request seems reasonable, and existing IAM rules are technically followed. Yet effective access controls are bypassed because authorization is evaluated at the agent level, not the user level.
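The difference between agent-level and user-level evaluation can be shown in a few lines. This is a minimal sketch under stated assumptions: the permission strings, the two lookup tables, and both function names are hypothetical, and a real deployment would query its IAM system rather than in-memory dictionaries.

```python
from typing import Dict, Set

# Hypothetical permission tables, for illustration only.
AGENT_PERMISSIONS: Dict[str, Set[str]] = {
    "ops-agent": {"logs:read", "prod-config:write", "pipeline:restart"},
}
USER_PERMISSIONS: Dict[str, Set[str]] = {
    "engineer-no-prod": {"logs:read"},
    "sre-on-call": {"logs:read", "prod-config:write", "pipeline:restart"},
}


def agent_only_allows(agent_id: str, permission: str) -> bool:
    """Agent-level check: only the agent's own permissions are consulted."""
    return permission in AGENT_PERMISSIONS.get(agent_id, set())


def user_aware_allows(agent_id: str, user_id: str, permission: str) -> bool:
    """User-aware check: both the agent and the requesting user must hold the permission."""
    return (
        permission in AGENT_PERMISSIONS.get(agent_id, set())
        and permission in USER_PERMISSIONS.get(user_id, set())
    )


# The agent-level check passes no matter who is asking.
print(agent_only_allows("ops-agent", "prod-config:write"))
# The user-aware check blocks the engineer without production access.
print(user_aware_allows("ops-agent", "engineer-no-prod", "prod-config:write"))
print(user_aware_allows("ops-agent", "sre-on-call", "prod-config:write"))
```

Requiring both identities to hold the permission is one possible design, not the only one. Its appeal is that a user never gains more through the agent than they could obtain directly.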

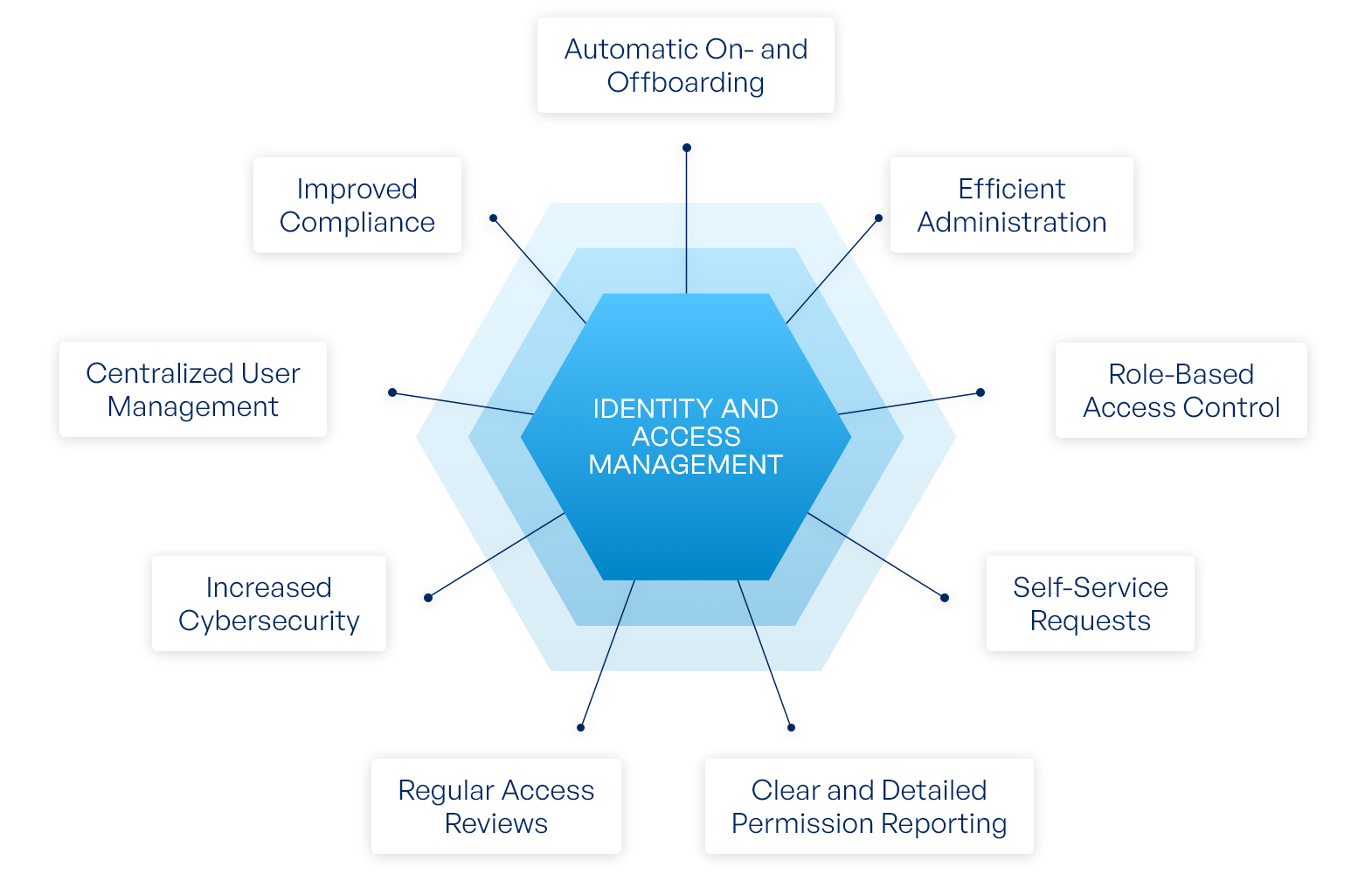

Why Traditional IAM Falls Short

Most identity and access management systems were designed around human users and direct system access. In agent-mediated workflows, that assumption no longer holds. Permissions are checked against the agent’s identity, not against the identity of the user who made the request. Logs and audit trails compound the problem: because they record the agent as the actor, they mask who initiated the action and what their intent was.

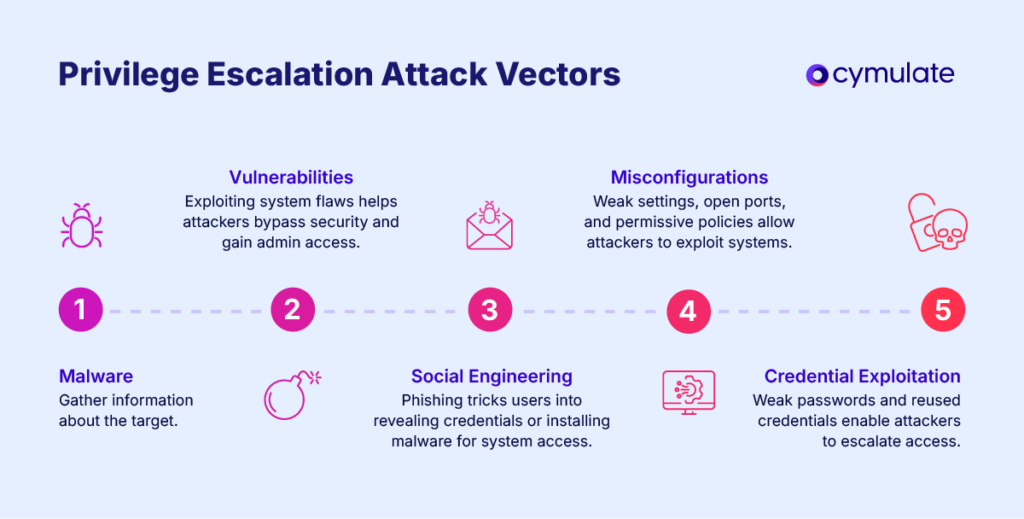

This loss of attribution makes it harder to enforce least privilege, detect misuse, or respond quickly during incidents. Security teams may not realize excessive access exists until it has already been exploited.

Gaining Visibility Into Agent-Centric Access

As AI agents take on more responsibility, organizations need clearer insight into how agent identities map to sensitive data and critical systems. Security teams must understand who is using each agent, what permissions the agent holds, and where gaps exist between user authorization and agent capabilities.
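The gap between user authorization and agent capabilities can be expressed as a set difference. The following sketch assumes permissions are available as plain sets of strings; the `permission_gaps` function, the user names, and the permission labels are all hypothetical.

```python
from typing import Dict, List, Set


def permission_gaps(
    agent_perms: Set[str], users_of_agent: Dict[str, Set[str]]
) -> Dict[str, List[str]]:
    """For each user of an agent, list the permissions the agent holds but the user does not."""
    gaps: Dict[str, List[str]] = {}
    for user, user_perms in users_of_agent.items():
        excess = agent_perms - user_perms
        if excess:
            gaps[user] = sorted(excess)
    return gaps


# Hypothetical data: one agent shared by two users with different entitlements.
agent_perms = {"crm:read", "billing:read", "finance:read"}
users_of_agent = {
    "analyst": {"crm:read"},
    "finance-lead": {"crm:read", "billing:read", "finance:read"},
}

gaps = permission_gaps(agent_perms, users_of_agent)
print(gaps)  # {'analyst': ['billing:read', 'finance:read']}
```

Users who appear in the result are the ones for whom the agent could act as an escalation path.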

Permissions also change over time. Without continuous monitoring, new escalation paths can quietly appear as agents gain access to additional systems or as user roles evolve.
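A simple form of continuous monitoring is to compare periodic snapshots of agent permissions and flag anything newly granted. The sketch below assumes snapshots are available as dictionaries mapping agent names to permission sets; the `new_grants` function and the sample data are hypothetical.

```python
from typing import Dict, List, Set


def new_grants(
    previous: Dict[str, Set[str]], current: Dict[str, Set[str]]
) -> Dict[str, List[str]]:
    """Return permissions present in the current snapshot but absent from the previous one."""
    changes: Dict[str, List[str]] = {}
    for agent, perms in current.items():
        added = perms - previous.get(agent, set())
        if added:
            changes[agent] = sorted(added)
    return changes


# Hypothetical snapshots taken at two points in time.
previous = {"hr-agent": {"idp:write"}}
current = {
    "hr-agent": {"idp:write", "vpn:write"},
    "support-agent": {"crm:read"},
}

changes = new_grants(previous, current)
print(changes)  # {'hr-agent': ['vpn:write'], 'support-agent': ['crm:read']}
```

Each flagged grant is a candidate for review, since it may open an escalation path that did not exist when the agent was first approved.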

Securing AI Agent Adoption

AI agents are becoming some of the most powerful actors in the enterprise. Their ability to move across systems and act at machine speed makes them valuable, but also risky when overtrusted. Broad permissions, shared usage, and limited visibility can turn agents into hidden security blind spots.

Platforms like Wing Security aim to address this challenge by helping organizations discover active AI agents, understand what they can access, and correlate agent actions with user context. By identifying where agent permissions exceed user authorization, security teams can reduce risk without slowing down automation.

As AI-driven workflows continue to expand, organizations that invest in visibility and control will be better positioned to capture the benefits of AI agents without sacrificing accountability or security.