A new joint investigation from SentinelOne SentinelLABS and Censys is raising concerns about how open-source AI tools are being deployed around the world. Their findings show that self-hosted AI is quietly forming what the researchers describe as a large, unmanaged, publicly accessible AI compute layer spread across 175,000 unique Ollama hosts in 130 countries.

Unlike deployments on managed cloud AI platforms, which come with security guardrails and default monitoring, these systems often operate outside standard security oversight, whether they run on cloud servers or on residential networks. Researchers say this creates a new kind of risk: AI infrastructure exposed directly to the public internet without proper access controls.

China Leads Exposure Numbers, but the Footprint Is Global

The report notes that the largest concentration of exposed hosts is in China, which accounts for a little over 30% of the total. The exposure is nonetheless worldwide: other countries with large numbers of hosts include the United States, Germany, France, South Korea, India, Russia, Singapore, Brazil, and the United Kingdom.

This wide distribution makes the issue harder to govern, especially because many systems aren’t running inside normal enterprise networks.

Why Ollama Matters

Ollama is an open-source framework that lets users download and run large language models (LLMs) locally on Windows, macOS, and Linux. By default, Ollama binds to localhost (127.0.0.1:11434), so it is reachable only from the machine where it is installed.

Investigators warn, however, that exposing it online is easy. A single configuration change makes Ollama bind to 0.0.0.0 (all network interfaces) or another public interface, which can unintentionally make the service reachable by anyone on the internet.
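The bind address is set through the `OLLAMA_HOST` environment variable (for example, `OLLAMA_HOST=0.0.0.0:11434` makes the server listen on all interfaces). As a quick self-audit, the Python sketch below parses a value in that style and reports whether the server would stay on loopback. The helper names `bind_host` and `is_loopback_only` are hypothetical, not part of Ollama, and the sketch assumes values of the form `host`, `host:port`, `[ipv6]:port`, or `scheme://host:port`, treating an unset or empty value as Ollama's default.

```python
import ipaddress
import os
from urllib.parse import urlsplit

# Ollama's default listen address when OLLAMA_HOST is not set.
DEFAULT_BIND = "127.0.0.1:11434"


def bind_host(value: str) -> str:
    """Extract the host from an OLLAMA_HOST-style value.

    Accepts 'host', 'host:port', '[ipv6]:port' or 'scheme://host:port'.
    Assumption: an empty value behaves like the default loopback address.
    """
    value = (value or "").strip() or DEFAULT_BIND
    if "://" not in value:
        value = "//" + value  # make urlsplit parse it as a network location
    return urlsplit(value).hostname or ""


def is_loopback_only(value: str) -> bool:
    """Return True only if the server would listen on this machine alone."""
    host = bind_host(value)
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Empty host or a DNS name: we cannot prove it is local, so flag it.
        return False


if __name__ == "__main__":
    current = os.environ.get("OLLAMA_HOST", "")
    for value in (current, "0.0.0.0:11434", "[::1]:11434"):
        status = "loopback only" if is_loopback_only(value) else "reachable beyond this host"
        print(f"{value or '(unset)'}: {status}")
```

Note that "reachable beyond this host" does not by itself mean internet-facing; a firewall or NAT may still block outside traffic. It does mean that protection now depends on something other than Ollama's own default.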

As with other locally hosted AI tools (including recently trending projects such as Moltbot, formerly known as Clawdbot), this “local-first” model means these systems can fall entirely outside traditional enterprise security controls, creating blind spots.

Tool-Calling Turns AI From “Text Output” Into Action Execution

One of the most serious findings of the investigation is that nearly half of the exposed hosts appear to support tool-calling. This feature lets language models go beyond generating text; through connected tools, they can:

- execute code

- call external APIs

- query databases

- interact with other systems

Researchers Gabriel Bernadett-Shapiro and Silas Cutler say this highlights a growing shift: LLMs are not just being used for chat, but are increasingly being integrated into real operational workflows.

Security experts warn that this changes the entire threat model. A text-only model might generate harmful or misleading content, but a tool-enabled model can actually perform actions, including privileged ones, if it is not properly secured.

In simple terms: a public AI endpoint with tool-calling and no authentication becomes a high-severity security risk.
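In tool-calling, the model emits a structured request (a tool name plus arguments) and the surrounding application executes it. The hypothetical Python sketch below is not Ollama's API; it only illustrates where the risk sits. Whoever can reach the endpoint effectively chooses which tool runs, so a dispatcher should check both the caller and an explicit allowlist before executing anything. The tools `get_weather` and `run_shell` are made-up stand-ins.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass
class ToolCall:
    """A tool request as a model might emit it: a name plus JSON-style arguments."""
    name: str
    arguments: Dict[str, Any]


def get_weather(city: str) -> str:
    # Hypothetical harmless, read-only tool.
    return f"Sunny in {city}"


def run_shell(command: str) -> str:
    # Hypothetical stand-in for a privileged tool; it never executes anything.
    return f"(would execute) {command}"


# Every tool the application knows about...
REGISTRY: Dict[str, Callable[..., str]] = {"get_weather": get_weather, "run_shell": run_shell}
# ...versus the subset this deployment explicitly permits the model to trigger.
ALLOWLIST = {"get_weather"}


def is_permitted(call: ToolCall, caller_authenticated: bool) -> bool:
    """Require BOTH an authenticated caller and an allowlisted tool."""
    return caller_authenticated and call.name in ALLOWLIST


def dispatch(call: ToolCall, caller_authenticated: bool) -> str:
    """Run a model-requested tool only if it passes the permission check."""
    if not is_permitted(call, caller_authenticated):
        raise PermissionError(f"refusing tool {call.name!r}")
    return REGISTRY[call.name](**call.arguments)


if __name__ == "__main__":
    print(dispatch(ToolCall("get_weather", {"city": "Berlin"}), caller_authenticated=True))
```

On an exposed, unauthenticated endpoint, the first check is effectively always true for an attacker, which is why the allowlist alone is not enough.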

More Risks: Vision, Reasoning, and Uncensored Templates

The investigation also found that some exposed systems support additional capabilities beyond basic text generation, including:

- reasoning features

- vision-enabled processing

Even more alarming, 201 hosts were identified as running uncensored prompt templates, which can remove built-in safety restrictions and make misuse easier.

The Real-World Threat: LLMjacking

These publicly exposed systems are increasingly at risk of LLMjacking, a form of abuse where attackers hijack someone else’s AI resources for profit. Instead of paying for their own infrastructure, criminals can use exposed endpoints to run AI workloads such as:

- spam generation

- disinformation campaigns

- crypto-related computation

- reselling AI access to other criminal groups

Crucially, the victim pays the bill, whether in bandwidth, compute consumption, or cloud charges.
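Because this kind of abuse shows up as unexpected consumption, per-client usage accounting is one practical way to notice it. The sketch below is a minimal, hypothetical sliding-window counter (not a feature of Ollama) that flags any client whose request count inside the window exceeds a limit. The threshold and window are placeholder values, and timestamps are assumed to arrive in non-decreasing order per client.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class UsageMonitor:
    """Flag clients whose request volume within a sliding window exceeds a limit."""

    def __init__(self, max_requests: int = 100, window_seconds: float = 60.0):
        self.max_requests = max_requests  # placeholder threshold; tune per deployment
        self.window = window_seconds
        self._events: Dict[str, Deque[float]] = defaultdict(deque)

    def record(self, client: str, timestamp: float) -> bool:
        """Record one request; return True if the client is now over the limit.

        Assumes timestamps for a given client are non-decreasing.
        """
        events = self._events[client]
        events.append(timestamp)
        # Drop requests that have aged out of the window.
        while events and timestamp - events[0] >= self.window:
            events.popleft()
        return len(events) > self.max_requests


if __name__ == "__main__":
    monitor = UsageMonitor(max_requests=3, window_seconds=10)
    print([monitor.record("203.0.113.7", t) for t in (0, 1, 2, 3)])
```

Counting tokens instead of requests would track cost more directly; requests keep the sketch short.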

Threat Actors Are Already Monetizing Exposed AI Endpoints

The risk isn’t hypothetical anymore.

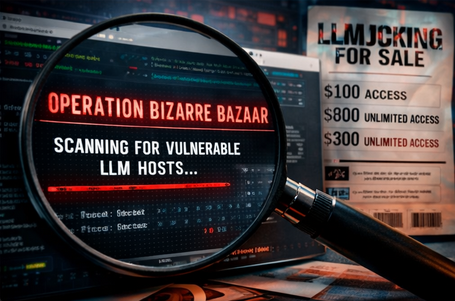

A separate report published this week by Pillar Security states that attackers are actively scanning for exposed LLM endpoints and monetizing them through an LLMjacking campaign called Operation Bizarre Bazaar.

That report outlines a structured operation with three major stages:

- Scanning the internet for exposed Ollama instances, vLLM servers, and OpenAI-compatible APIs that lack authentication

- Validating the endpoints by checking response quality and behavior

- Selling discounted access through a service advertised on silver[.]inc, described as a Unified LLM API Gateway

Researchers say this is the first confirmed example of a complete “LLMjacking marketplace” in which the full chain, from discovery to resale, is documented and attributed.

The campaign has been linked to a threat actor known as Hecker, also associated with the aliases Sakuya and LiveGamer101.
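The first stage of such a campaign only needs an endpoint that answers without credentials, so defenders can run the equivalent check against hosts they own. The sketch below uses only the Python standard library and Ollama's `GET /api/tags` route, which lists installed models; the helper name `answers_without_auth` is hypothetical.

```python
import http.client
import json
import urllib.request


def answers_without_auth(base_url: str, timeout: float = 3.0) -> bool:
    """Return True if base_url serves Ollama's model list to a request with no credentials."""
    url = base_url.rstrip("/") + "/api/tags"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            body = json.load(resp)
    except (OSError, http.client.HTTPException, ValueError):
        # OSError covers URLError/HTTPError (e.g. 401/403), refusals and timeouts;
        # HTTPException covers non-HTTP replies; ValueError covers bad URLs and non-JSON bodies.
        return False
    # An open Ollama instance replies with a JSON object containing a "models" list.
    return isinstance(body, dict) and "models" in body


if __name__ == "__main__":
    target = "http://127.0.0.1:11434"  # replace with a host you operate
    verdict = "OPEN (no auth)" if answers_without_auth(target) else "no unauthenticated Ollama API found"
    print(f"{target}: {verdict}")
```

A `False` result only means this particular route did not answer openly; it is a spot check, not a substitute for the exposure management described below.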

Why Defenders Should Pay Attention

A key concern highlighted across the reports is that exposed Ollama systems are highly decentralized. Many are distributed across cloud environments and residential IP space, making enforcement and policy control extremely difficult.

This kind of edge deployment also increases the likelihood of:

- prompt injection attacks

- malicious proxying of traffic through victim infrastructure

- governance and accountability gaps

Key Takeaway

The main message from researchers is clear: LLMs deployed at the edge must be secured like any other internet-facing infrastructure.

That means defenders should apply the same baseline protections they would use for public services, including:

- authentication (no unauthenticated endpoints)

- logging and monitoring

- network segmentation

- access restrictions / firewall rules

- endpoint discovery and exposure management
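Authentication and access restrictions can be combined in a small request gate placed in front of the model server, for example inside a reverse proxy, assuming the model server itself does not authenticate requests (as with the exposed instances described above). The hypothetical sketch below admits a request only if the client address falls inside an allowed network and the `Authorization` header carries the expected bearer token, compared in constant time with `hmac.compare_digest`. It also logs every decision, which feeds the logging and monitoring item.

```python
import hmac
import ipaddress
import logging
from typing import Iterable, Optional

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
log = logging.getLogger("llm-gate")


def is_allowed(client_ip: str, auth_header: Optional[str], expected_token: str,
               allowed_networks: Iterable[str]) -> bool:
    """Admit a request only from an allowed network AND with the correct bearer token."""
    try:
        addr = ipaddress.ip_address(client_ip)
    except ValueError:
        log.warning("deny: unparseable client address %r", client_ip)
        return False
    # Network allowlist (the application-level analogue of a firewall rule).
    if not any(addr in ipaddress.ip_network(net) for net in allowed_networks):
        log.warning("deny: %s is outside the allowed networks", addr)
        return False
    prefix = "Bearer "
    if not auth_header or not auth_header.startswith(prefix):
        log.warning("deny: %s sent no bearer token", addr)
        return False
    # Constant-time comparison avoids leaking token contents through timing.
    if not hmac.compare_digest(auth_header[len(prefix):].encode(), expected_token.encode()):
        log.warning("deny: %s sent an invalid token", addr)
        return False
    log.info("allow: %s", addr)
    return True
```

In practice the token would come from a secret store, and the same two checks can also be enforced with firewall rules and an off-the-shelf authenticating reverse proxy rather than application code.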

As LLMs become more tightly connected to real operational tools, a compromise can do far more damage than produce “bad text output.”