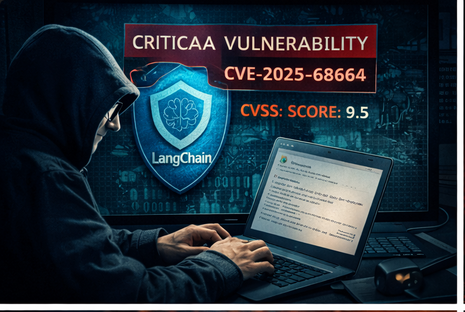

Critical LangChain Vulnerability Exposes AI Systems to Data Theft and Prompt Injection

A serious security flaw has been uncovered in LangChain Core, a widely used framework for building applications powered by large language models (LLMs). The vulnerability could allow attackers to steal sensitive data, manipulate AI responses, and potentially gain deeper system…